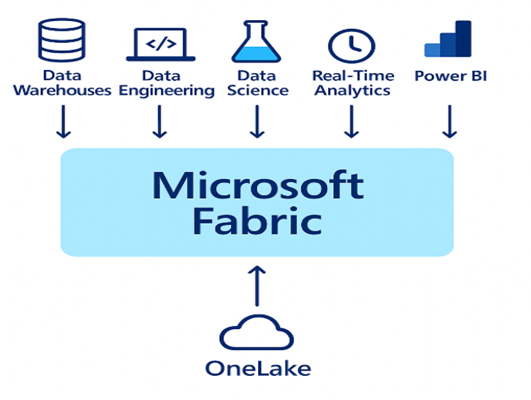

CONNECTING IT ALL WITH MICROSOFT FABRIC

INTRODUCTION

Have you ever tried to build a data pipeline and felt like you were herding cats? No? Well, to be fair, some cats behave. But if you have ever tried connecting data from different tools, you probably know the feeling. You jump between tools, patch scripts together, and reload data again and again just to reach your final goal.

Now imagine if your entire data pipeline lived in one place. No endless tool-hopping. No messy transfers. Just one environment from start to finish… That is exactly what Microsoft Fabric offers.

Let us dive deeper and see how this platform brings the whole data journey together.

WHAT IS MICROSOFT FABRIC?

Microsoft Fabric is an all-in-one data and analytics platform from Microsoft. It brings data movement, data engineering, data science, real-time analytics, and business intelligence together in one connected environment, drawing on the best features of Azure Synapse, Data Factory, and Power BI in a unified workflow.

With Fabric, you can connect to data sources, clean and prepare the data, build models, and create visual reports, all within the same workspace. Every step flows naturally into the next, allowing teams to work faster and with complete consistency.

At the center of this experience is OneLake, Microsoft’s shared data foundation. Think of it as OneDrive for data, except it speaks the language of analytics. You store a file or a table once, and every team and every workload inside Fabric can use it immediately.

SO, HOW DOES MICROSOFT FABRIC ACTUALLY WORK?

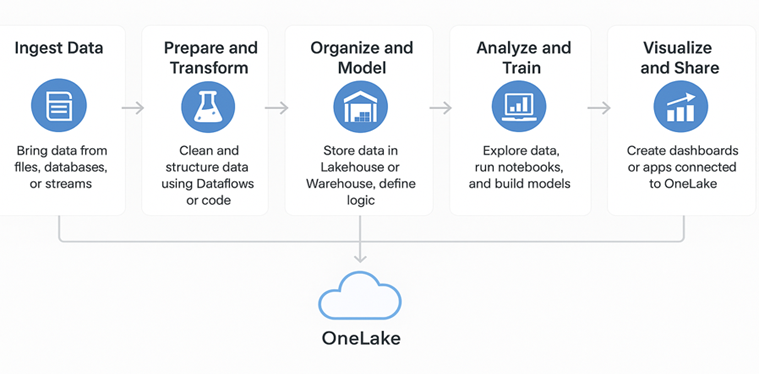

While Fabric can do much more, let us explore its core stages and see how data moves from ingestion to insight in one connected environment.

1. Ingest Data

It all begins with connecting Fabric to your data. You might have files stored in SharePoint or OneDrive, tables sitting in SQL databases, or streaming data coming from apps and sensors.

Fabric offers a collection of built-in tools that help you bring this data into your workspace quickly and in the format you need. Each of these tools has a specific role in how data is collected and prepared for use.

- Copy Job is used to move data from one place to another. It can bring in a full dataset, schedule regular updates, or even react to events automatically.

- Dataflow Gen2 helps you clean and shape the data as it comes in through a simple, visual interface. You can filter, merge, and adjust columns before the data lands in storage.

- Eventstream captures live data that arrives continuously, such as IoT readings or website activity, and sends it straight into Fabric in real time.

No matter the source or method, everything lands in OneLake, the shared foundation that stores all your data in one organized location.
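To make the difference between a full load and regular updates concrete, here is a minimal sketch in plain Python. It is not how Copy Job is implemented; it only illustrates the common "watermark" idea, where each run copies just the rows changed since the previous run. The function `incremental_copy`, the `modified` field, and the `lake` list are all hypothetical stand-ins.

```python
from datetime import datetime

def incremental_copy(source_rows, destination, watermark):
    """Copy only rows modified after `watermark`; return the new watermark."""
    new_rows = [r for r in source_rows if r["modified"] > watermark]
    destination.extend(new_rows)
    # Advance the watermark to the latest change copied, or keep it if nothing was new.
    return max((r["modified"] for r in new_rows), default=watermark)

# Hypothetical source table and destination (a list standing in for a table in OneLake).
source = [
    {"id": 1, "modified": datetime(2024, 1, 1)},
    {"id": 2, "modified": datetime(2024, 1, 2)},
]
lake = []

# First run: starting from the smallest possible watermark behaves like a full load.
watermark = incremental_copy(source, lake, datetime.min)

# A new row appears at the source; the next scheduled run copies only that row.
source.append({"id": 3, "modified": datetime(2024, 1, 3)})
watermark = incremental_copy(source, lake, watermark)

print([r["id"] for r in lake])  # [1, 2, 3]
```

The second run touches one row instead of three, which is the whole point of scheduling incremental updates rather than reloading everything each time.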

2. Prepare and Transform

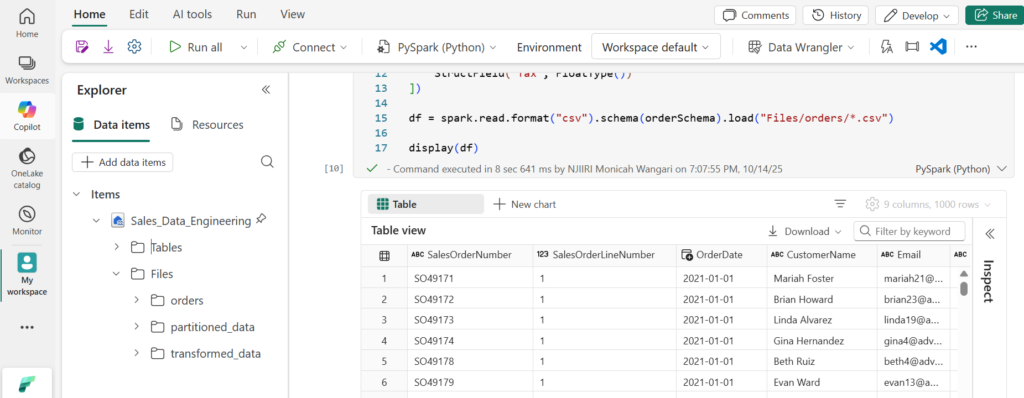

Once data is in OneLake, it needs preparation before analysis. Fabric lets you clean, combine, and organize data in two ways. You can work visually through Dataflow Gen2, which works like Power Query for filtering and shaping data, or you can use notebooks with Python, Spark, or SQL for more advanced transformation. Both methods work directly on data stored in OneLake, keeping it consistent and ready for modeling.
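As a rough illustration of what "clean, combine, and organize" can mean in a notebook, here is a small sketch in plain Python. In a real Fabric notebook you would more likely use Spark or a dataframe library against tables in OneLake; the sample rows, the column names, and the `prepare` function below are made up for the example.

```python
# Hypothetical raw order rows, as they might arrive from a source system.
raw_orders = [
    {"order_id": "1", "customer_id": "c1", "amount": " 19.90 "},
    {"order_id": "2", "customer_id": "c2", "amount": ""},  # missing amount
    {"order_id": "3", "customer_id": "c1", "amount": "5.10"},
]
# Hypothetical lookup table used to enrich the orders.
customers = {"c1": "Ada", "c2": "Grace"}

def prepare(orders, customer_names):
    """Filter out incomplete rows, fix column types, and merge in customer names."""
    cleaned = []
    for row in orders:
        amount_text = row["amount"].strip()
        if not amount_text:
            continue  # filter: drop rows with no amount
        cleaned.append({
            "order_id": int(row["order_id"]),                             # adjust type
            "customer": customer_names.get(row["customer_id"], "unknown"),  # merge
            "amount": float(amount_text),                                 # adjust type
        })
    return cleaned

prepared = prepare(raw_orders, customers)
for row in prepared:
    print(row)
```

The same three moves (filter, merge, adjust columns) are what the visual steps in Dataflow Gen2 express without code.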

3. Store and Model

After preparation, the cleaned data is stored in structured spaces that support analysis.

- Lakehouse combines the flexibility of data lakes with the performance of data warehouses. It stores large volumes of structured and unstructured data while keeping them query-ready.

- Data Warehouse is for highly structured, relational data that supports advanced reporting and fast queries.

- Semantic Model lets you define relationships between tables, create calculated fields, and apply business logic that turns raw data into meaningful insights.

These storage layers all connect to the same OneLake foundation, so your data stays consistent across every step.
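To show what "relationships between tables" and "calculated fields" look like in practice, here is a small sketch that uses Python's built-in SQLite as a stand-in. This is only an analogy: a Fabric Data Warehouse is queried with T-SQL and a Semantic Model usually expresses calculations in DAX. The `customers` and `sales` tables and their columns are hypothetical.

```python
import sqlite3

# In-memory database standing in for a warehouse; nothing is written to disk.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (customer_id INTEGER PRIMARY KEY, region TEXT);
    CREATE TABLE sales (
        sale_id INTEGER PRIMARY KEY,
        customer_id INTEGER REFERENCES customers(customer_id),  -- the relationship
        quantity INTEGER,
        unit_price REAL
    );
    INSERT INTO customers VALUES (1, 'North'), (2, 'South');
    INSERT INTO sales VALUES (1, 1, 2, 10.0), (2, 1, 1, 5.0), (3, 2, 4, 2.5);
""")

# Calculated field: revenue = quantity * unit_price, summed per region.
revenue_by_region = conn.execute("""
    SELECT c.region, SUM(s.quantity * s.unit_price) AS revenue
    FROM sales AS s
    JOIN customers AS c ON c.customer_id = s.customer_id
    GROUP BY c.region
    ORDER BY c.region
""").fetchall()
conn.close()

print(revenue_by_region)  # [('North', 25.0), ('South', 10.0)]
```

Defining the relationship and the revenue calculation once, in the model, is what lets every report reuse the same business logic instead of redefining it.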

4. Analyze and Train

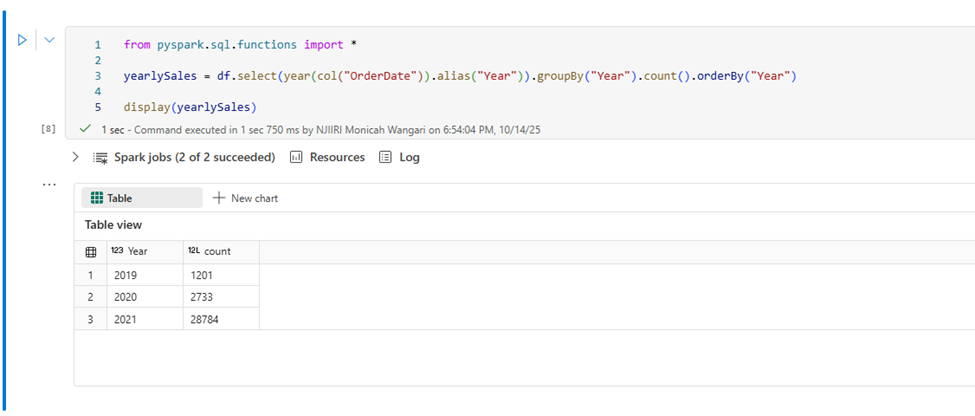

Once your data is organized, Fabric allows you to analyze it or even train predictive models. You can use notebooks to write analysis code, build charts, or create machine learning models. Tools such as Experiment and ML Model make it easier to test ideas and train algorithms without leaving the platform. For real-time insights, Anomaly Detector helps spot unusual patterns as data changes. Data Agent can then take action on those findings or handle other repetitive tasks automatically, keeping your workflows running smoothly.
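To give a feel for what "spotting unusual patterns" means, here is a deliberately simple sketch that flags values far from the average using a z-score. It is not the method used by Fabric's Anomaly Detector, which is not described here; the `find_anomalies` function, the threshold of 2.0, and the sample temperature readings are all assumptions for illustration.

```python
from statistics import mean, stdev

def find_anomalies(readings, threshold=2.0):
    """Return (index, value) pairs that lie more than `threshold`
    sample standard deviations away from the mean."""
    mu = mean(readings)
    sigma = stdev(readings)
    if sigma == 0:
        return []  # all readings identical, nothing stands out
    return [(i, x) for i, x in enumerate(readings) if abs(x - mu) / sigma > threshold]

# Hypothetical sensor readings with one obvious spike.
temps = [20.1, 19.8, 20.3, 20.0, 19.9, 35.0, 20.2, 20.1]
print(find_anomalies(temps))  # [(5, 35.0)]
```

A fixed threshold like this is easy to reason about but crude; real-time detectors typically adapt to trends and seasonality, which is where a managed tool earns its keep.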

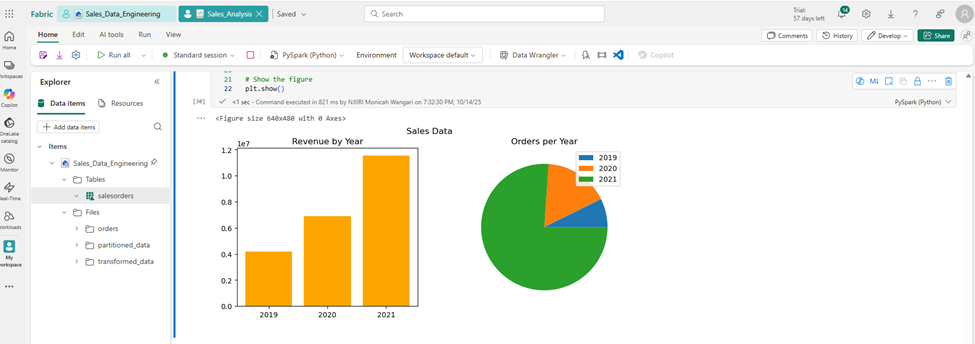

5. Visualize and Share

Once your models and analyses are ready, you can bring everything to life through visuals and applications. Power BI remains the main tool for creating interactive dashboards and reports, but Fabric also connects easily to Excel, notebooks, and custom-built applications. These can include internal dashboards, web apps, or automated systems that use Fabric data and models to deliver real-time insights or trigger specific actions.

WHAT MAKES FABRIC STAND OUT?

Other than its collaborative nature and efficiency, Fabric stands out because of a few key strengths.

- Scalability: Fabric runs on Microsoft’s cloud infrastructure and is built to handle everything from small datasets to enterprise workloads without losing performance.

- Data Security: Fabric integrates with Microsoft Purview, giving full visibility into data access, lineage, and compliance. Built-in encryption and access control keep every dataset in OneLake protected while remaining easy to manage.

- AI Integration: Copilot simplifies work by generating code, insights, and reports through natural language, reducing time spent on repetitive tasks.

- Cost Efficiency: Fabric is not always cheaper. It becomes more cost-effective when teams use most of its features within one environment, but for smaller projects, separate tools may still cost less.

WRAPPING IT UP

Working with data will probably never stop feeling a little wild. There are always a few tables that refuse to match, a model that won’t refresh, a dashboard that loads only when no one is watching, a query that makes you question your life choices, and a file you swore existed that just doesn’t. But with Fabric, at least the cats stay in one room. It keeps your data, tools, and focus together!

No duplicates, no switching between tools, and no guessing which version is right. You work from one source of truth 😉